Capturing a moment in a single photograph is a timeless tradition, yet static images often fail to convey the complete atmosphere of a cherished memory. We frequently find ourselves looking at old family portraits or travel snapshots, wishing we could see the wind blowing through the trees or the subtle smile forming on a loved one’s face. This gap between a frozen frame and a living memory creates a sense of detachment that modern creators are eager to bridge with Image to Video AI.

Image to Video AI turns a static photo into short animated video content by adding motion, camera movement, and scene depth through generative models. It helps creators transform still images into more engaging visual stories for social media, marketing, personal memories, and digital storytelling without traditional filming or heavy editing.

Table of Contents

- How Image to Video AI Is Changing Visual Storytelling

- A Curated Selection Of Leading Platforms For Image Animation

- The Core Implementation Process For Generating Dynamic Visual Content

- Comparative Analysis Of Modern Generative Video Performance Characteristics

- Expanding The Horizons Of Personal And Professional Storytelling

- Technical Nuances Of The Current Generation Video Models

How Image to Video AI Is Changing Visual Storytelling

By utilizing Image to Video AI, individuals can now breathe life into these still moments, transforming them into cinematic narratives that resonate on a much deeper level than a simple gallery of photos ever could.

The shift from static to dynamic content is not merely a trend but a fundamental change in how we consume digital media. In my observations, the ability to add motion to a subject without complex filming equipment or expensive editing software is democratizing video production.

While traditional videography requires hours of setup and post-production, generative AI allows for the synthesis of motion in a matter of minutes. This technology interprets the depth and context of a 2D image, predicting how shadows should shift and how textures should move.

It is a fascinating intersection of mathematics and art that provides a new canvas for storytellers who previously felt limited by their technical skills or hardware constraints.

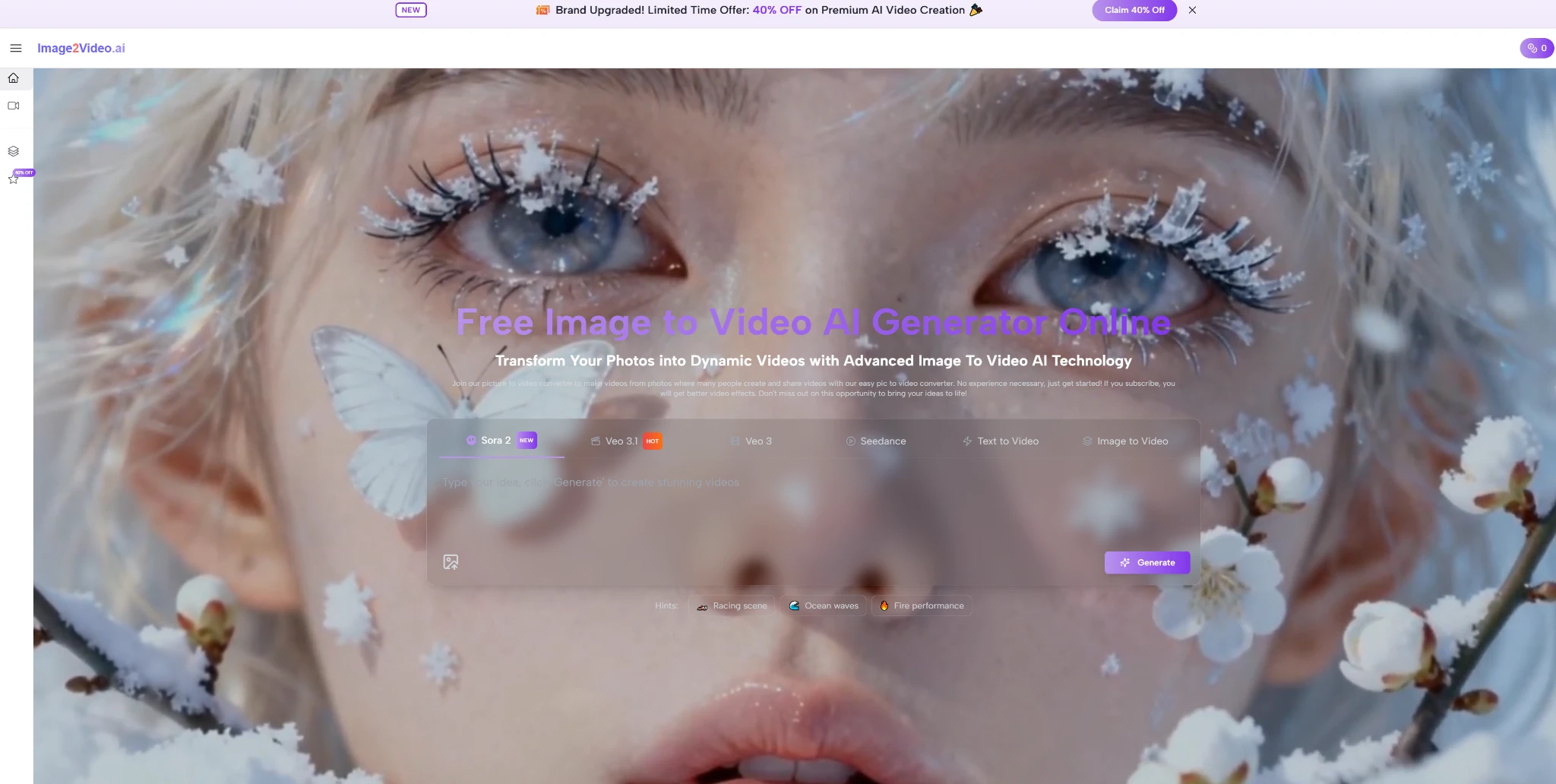

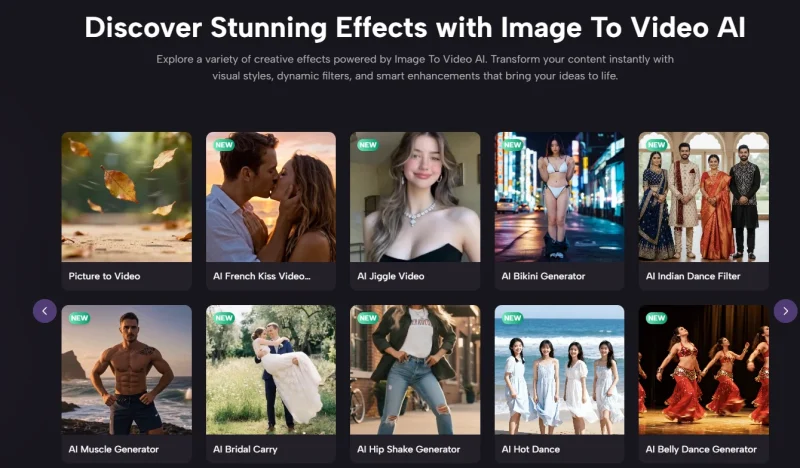

A Curated Selection Of Leading Platforms For Image Animation

The landscape of generative video is expanding rapidly, with several key players offering unique approaches to motion synthesis. Below is a list of prominent platforms that are currently defining the industry standards for converting images into high-quality video content.

- image2video.ai

- Runway Gen-2

- Pika Labs

- Luma Dream Machine

- Kling AI

- Sora by OpenAI

- Leonardo AI

- Kaiber AI

- Hailuo AI

- Stable Video Diffusion

The Core Implementation Process For Generating Dynamic Visual Content

Generating a video from a static source is a streamlined procedure designed for accessibility. Based on the official workflow, the process involves four steps that ensure the AI understands the creative intent behind the transformation.

Step 1: Uploading High Resolution Source Media

The first step involves selecting a clear image in JPEG or PNG format. Starting with a high-quality source is essential because the AI uses the existing pixels as the foundation for every subsequent frame it generates.
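Before uploading, it can help to confirm that a file really is a JPEG or PNG rather than trusting its extension. The sketch below is a minimal illustration that checks the well-known leading bytes of each format. The function names are hypothetical and are not part of any platform's tooling.

```python
from typing import Optional

# Standard file signatures ("magic bytes") for the two accepted formats.
PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
JPEG_SIGNATURE = b"\xff\xd8\xff"


def detect_image_format(header: bytes) -> Optional[str]:
    """Return 'png' or 'jpeg' based on the leading bytes, or None if unknown."""
    if header.startswith(PNG_SIGNATURE):
        return "png"
    if header.startswith(JPEG_SIGNATURE):
        return "jpeg"
    return None


def is_supported_upload(path: str) -> bool:
    """Read the first 8 bytes of a file and check for a JPEG or PNG signature."""
    with open(path, "rb") as f:
        return detect_image_format(f.read(8)) is not None
```

A check like this only confirms the container format. It says nothing about resolution or sharpness, which still need a visual review.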

Step 2: Defining Motion Through Natural Language Prompts

Once the image is staged, users provide a text description. This prompt guides the AI on what specific movements to perform, such as a camera pan, a character waving, or environmental changes like falling rain.

Step 3: Executing The AI Generation And Processing Phase

The system then enters the processing state. During this time, the model calculates the motion vectors and renders a five-second video sequence. In my tests, this typically takes around five minutes, depending on server load.

Step 4: Finalizing The Output And Exporting High Quality Files

After the status changes to completed, the video is ready for review. Users can then download the resulting MP4 file to share across various digital platforms or incorporate it into larger video projects.
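For readers who think in code, the four steps above map onto a submit, poll, and download loop. The sketch below simulates that loop with an in-memory stand-in, so it runs without any network access. `FakeVideoService`, its methods, and the status strings are all hypothetical and do not represent any real platform's API.

```python
import itertools
import time


class FakeVideoService:
    """Hypothetical in-memory stand-in for an image-to-video service."""

    def __init__(self, polls_until_done: int = 3):
        self._polls_until_done = polls_until_done
        self._jobs = {}
        self._ids = itertools.count(1)

    def submit(self, image: bytes, prompt: str) -> int:
        # Steps 1 and 2: the source image and the motion prompt go in together.
        if not image or not prompt.strip():
            raise ValueError("an image and a non-empty prompt are required")
        job_id = next(self._ids)
        self._jobs[job_id] = {"polls": 0, "prompt": prompt}
        return job_id

    def status(self, job_id: int) -> str:
        # Step 3: the job reports "processing" until enough polls have passed.
        job = self._jobs[job_id]
        job["polls"] += 1
        done = job["polls"] >= self._polls_until_done
        return "completed" if done else "processing"

    def download(self, job_id: int) -> bytes:
        # Step 4: the finished file is only available once the job completes.
        if self._jobs[job_id]["polls"] < self._polls_until_done:
            raise RuntimeError("job is not completed yet")
        return b"placeholder-mp4-bytes"


def generate_video(service, image: bytes, prompt: str,
                   poll_interval: float = 0.0, max_polls: int = 100) -> bytes:
    """Submit a job, poll until it completes, then return the video bytes."""
    job_id = service.submit(image, prompt)
    for _ in range(max_polls):
        if service.status(job_id) == "completed":
            return service.download(job_id)
        time.sleep(poll_interval)
    raise TimeoutError(f"job {job_id} did not complete after {max_polls} polls")


video = generate_video(FakeVideoService(), b"image-bytes", "slow camera pan to the left")
```

With a real service, the poll interval would be several seconds rather than zero, given that processing can take minutes.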

Comparative Analysis Of Modern Generative Video Performance Characteristics

To better understand how different tools handle the transition from stills to motion, it is helpful to look at the specific attributes that define a successful conversion. The following table highlights the primary factors that influence the final output quality.

| Performance Attribute | Entry Level Standard | Professional Tier Capability |
|---|---|---|
| Motion Consistency | Basic Frame Blending | Temporal Coherence and Stability |
| Output Duration | 2 to 3 Seconds | 5 to 10 Seconds |
| Resolution Support | 720p HD | 1080p Full HD or 4K |
| Processing Speed | 10 to 15 Minutes | Under 5 Minutes |
| Prompt Adherence | General Interpretation | Precise Motion Control |
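To put the duration and resolution rows in perspective, the rough calculation below estimates how many frames and pixels a model has to produce for a clip. The 24 frames per second default is an assumption made for illustration and does not come from any platform's documentation. The pixel dimensions are the standard ones for each resolution label.

```python
# Standard pixel dimensions for the resolution labels used in the table.
RESOLUTIONS = {"720p": (1280, 720), "1080p": (1920, 1080), "4K": (3840, 2160)}


def clip_workload(duration_s: float, resolution: str, fps: int = 24) -> dict:
    """Estimate frame and pixel counts for a clip (fps is an assumed value)."""
    width, height = RESOLUTIONS[resolution]
    frames = round(duration_s * fps)
    return {
        "frames": frames,
        "pixels_per_frame": width * height,
        "total_pixels": frames * width * height,
    }


entry = clip_workload(3, "720p")   # 72 frames, 921,600 pixels per frame
pro = clip_workload(10, "4K")      # 240 frames, 8,294,400 pixels per frame
ratio = pro["total_pixels"] / entry["total_pixels"]  # 30.0
```

Under these assumptions, a 10-second 4K clip contains 30 times as many pixels as a 3-second 720p clip. That gap helps explain why longer, higher-resolution output tends to sit in professional tiers.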

Expanding The Horizons Of Personal And Professional Storytelling

The practical applications for this technology extend far beyond simple entertainment. For content creators, the ability to turn a photo collection into a dynamic vlog or an engaging social media story is invaluable. It allows for a higher volume of content production without a proportional increase in effort. For example, a travel blogger can take a series of mountain photos and turn them into a sweeping aerial sequence that looks as though it was filmed with a professional drone.

Furthermore, the technology serves as a powerful tool for preserving history. By animating old family photographs, we can see ancestors move in a way that feels authentic, providing a sense of connection that history books cannot replicate.

While the technology is impressive, it is important to acknowledge certain limitations. In my experience, the AI may occasionally struggle with complex human anatomy or intricate overlapping objects, sometimes requiring multiple attempts with different prompts to achieve the desired look. Despite these hurdles, photo-to-video workflows remain one of the most efficient ways to generate high-impact visual assets.

Technical Nuances Of The Current Generation Video Models

The underlying technology often relies on models like Veo 3.1 or Seedance, which are designed to handle multiple reference points simultaneously. These models do not just “warp” the image; they generate new frames that behave as though the scene had been re-rendered in three dimensions. This allows for realistic camera movements like zooming and tilting. In my testing, the stability of the background during these movements has improved significantly compared to earlier versions of generative AI, making the resulting videos appear much more like traditional film.

Industry research suggests that video content generates significantly higher engagement rates on social platforms compared to static images. By integrating AI-generated motion into a digital strategy, users can capture attention more effectively. The simplicity of the web-based interface means that no professional editing experience is required, allowing anyone with a creative vision to produce cinematic results. As the models continue to evolve, we can expect even longer clip durations and higher levels of physical realism in every frame.

What is Image to Video AI?

Image to Video AI is a type of generative technology that turns a still image into a short video by adding motion, depth, and scene transitions.

It helps creators animate photos without filming new footage, making static visuals feel more dynamic and cinematic.

How does Image to Video AI work?

Most Image to Video AI tools start with a source image and a text prompt that describes the type of motion you want to see.

The model then generates new frames, simulates movement, and exports the result as a short video clip.

What can you use Image to Video AI for?

Image to Video AI can be used for social media content, product showcases, marketing visuals, travel memories, animated portraits, and short storytelling clips.

It is especially useful when you want more engaging visual content without a full video production setup.

Which tools support Image to Video AI generation?

Popular platforms in this space include Runway, Pika, Luma Dream Machine, Kling AI, Leonardo AI, Kaiber AI, and other emerging generative video tools.

Each platform differs in motion quality, prompt control, output length, and rendering speed.

Are there any limitations to Image to Video AI?

Yes, some results may look inconsistent when the source image contains complex anatomy, overlapping objects, or detailed background elements.

In many cases, better prompts and cleaner source images improve the final output significantly.

Andrej Fedek is the creator and one-person owner of three blogs: InterCool Studio, CareersMomentum, and Bettegi. As an experienced marketer, he is driven by turning leads into customers with White Hat SEO techniques. Besides being a boss, he is a real team player with a great sense of equality.