A surprising number of song ideas fail before they are ever judged on musical quality. They fail because they remain trapped as text. A hook sits in a notes app, a chorus lives in a draft folder, or a campaign concept includes a line that clearly wants music but never gets far enough to become sound. That is where an AI Music Generator becomes interesting. It gives written intention a faster route into audible form, which means people can react to the song idea instead of endlessly describing it.

This matters more than it first appears. In many creative workflows, delay changes perception. The longer an idea stays abstract, the easier it is to overestimate or underestimate its potential. Hearing an imperfect version today can be more useful than imagining a perfect version for two weeks. That practical shift is what makes lyric-driven music tools worth taking seriously rather than dismissing as shortcuts.

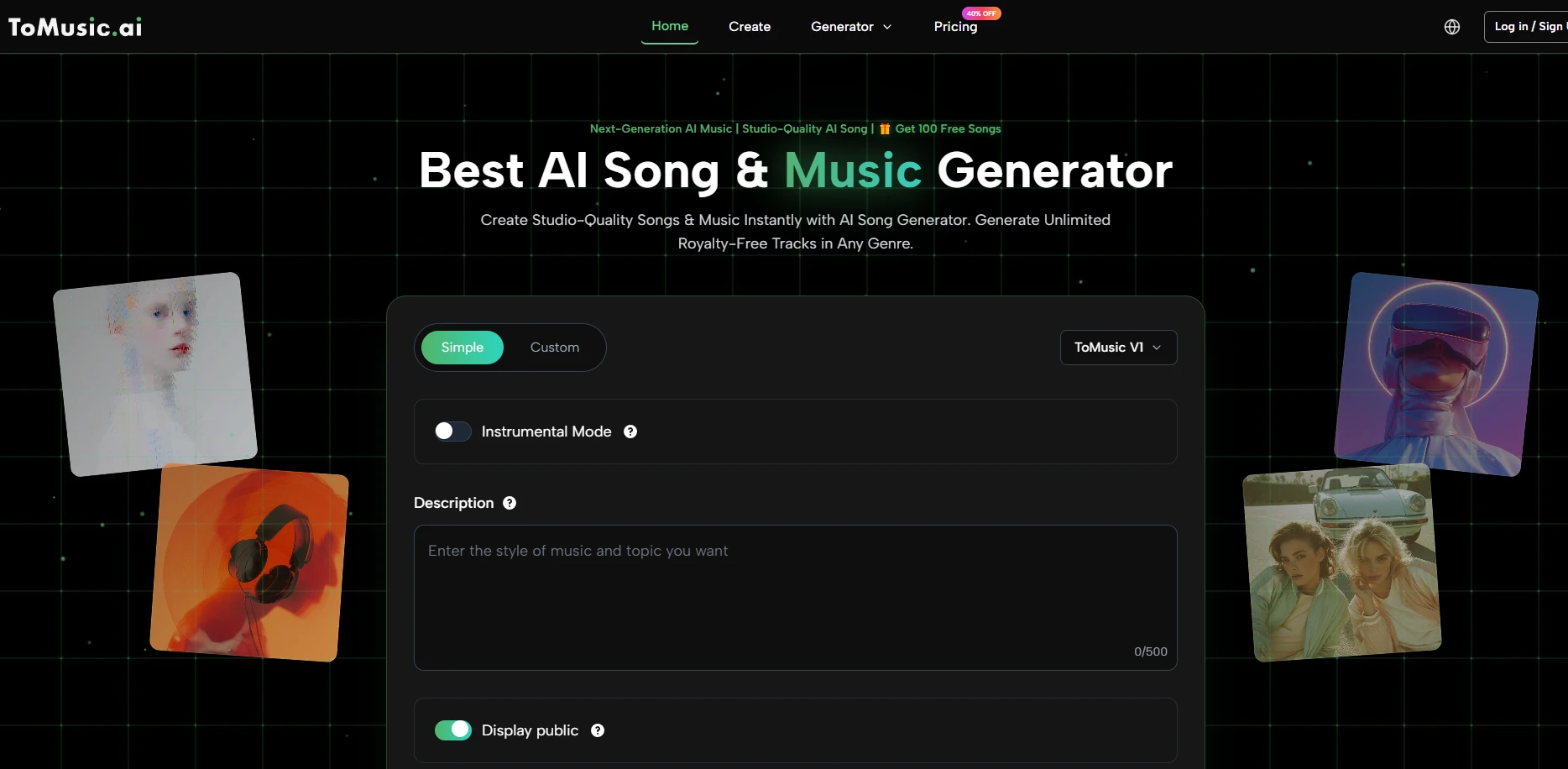

ToMusic is built around this kind of early conversion. Its official interface does not present music creation as one giant technical maze. Instead, it breaks the task into understandable decisions: choose a mode, define musical guidance, add lyrics if needed, and generate. That design choice suggests the platform is aimed at people who want meaningful control without being forced into the traditional complexity of production.

An AI Music Generator is a tool that uses artificial intelligence to turn text prompts, lyrics, and creative ideas into original music. It is commonly used to create songs, instrumentals, background audio, and fast music drafts for content, demos, or creative projects.

Table of Contents

- Why Lyrics Often Need Sound To Become Clear
- What ToMusic Makes Visible In The Process
- How Different Models Change User Expectations
- What Makes Lyrics Easier For The System To Handle
- A Three-Step Workflow For Better First Drafts
- Where This Kind Of Tool Can Be Most Useful
- What The Platform Does Not Solve Automatically
- Why The Platform Works Best As A Listening Device

Why Lyrics Often Need Sound To Become Clear

Lyrics can look strong on a screen and still fail musically. The reverse is also true. A line that seems plain in text can become emotionally persuasive once it has rhythm, melodic contour, and vocal attitude. This is one reason lyric-first generation deserves more attention.

An AI Music Generator is especially useful here because it puts the lyric into sound quickly, so people can react to how a line actually lands rather than describe what it might sound like.

Words alone do not reveal delivery choices

Pacing, stress, pause length, and tonal rise all influence whether a line feels flat or memorable. Until you hear those choices embodied in sound, you are often evaluating only half the material.

Musical framing can rescue uncertain writing

In my observation, not every successful AI-generated song begins with exceptional lyrics. Sometimes the writing is just competent, but the arrangement and performance frame the idea more convincingly than the raw text suggests. That does not excuse weak writing forever, but it does help creators discover where the real value of the concept may be hiding.

What ToMusic Makes Visible In The Process

The official creation page offers a useful clue about how the platform sees music generation. It includes fields for title, styles, genre, moods, voices, tempos, and lyrics, alongside mode selection and an instrumental option. That structure implies that the platform does not want the user to think only in broad prompts. It encourages a clearer song brief.
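One way to prepare such a brief before opening the creation page is to keep it as a small local record. The sketch below is only a planning aid: the `SongBrief` class, its field names, and the `missing_fields` helper are hypothetical, modeled on the field labels described above, and do not represent any ToMusic API. The song title and values are made-up placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class SongBrief:
    """Local planning record; names mirror the creation page labels (assumed)."""
    title: str
    mode: str = "Custom"          # "Simple" or "Custom"
    instrumental: bool = False    # True means no vocals and no lyrics
    styles: list[str] = field(default_factory=list)
    genre: str = ""
    moods: list[str] = field(default_factory=list)
    voice: str = ""
    tempo: str = ""
    lyrics: str = ""

    def missing_fields(self) -> list[str]:
        """Return the names of guidance fields that are still empty."""
        missing = [name for name in ("styles", "genre", "moods", "tempo")
                   if not getattr(self, name)]
        # Voice and lyrics only matter when the song is not instrumental.
        if not self.instrumental:
            missing += [name for name in ("voice", "lyrics")
                        if not getattr(self, name)]
        return missing


brief = SongBrief(
    title="Paper Lanterns",
    styles=["acoustic", "warm"],
    genre="indie folk",
    moods=["hopeful"],
    tempo="mid-tempo",
)
print(brief.missing_fields())  # ['voice', 'lyrics']
```

Checking for empty fields before generating is a cheap way to notice when a brief is still closer to a broad prompt than to a song description.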

Title and styles shape the song identity

A title may look minor, but it creates conceptual focus. Style guidance then narrows the musical world the system should build around that focus. Even simple constraints can improve coherence.

Genre, mood, and voice act as interpretive signals

These fields matter because written lyrics do not explain everything. The same lyric can become intimate, triumphant, ironic, cinematic, or commercial depending on how the system interprets delivery. Giving mood and voice guidance helps reduce random drift.

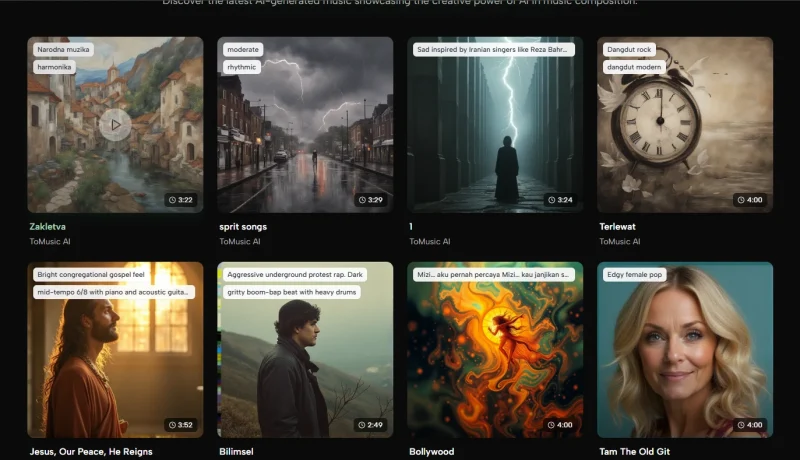

Instrumental mode expands the platform beyond songs

Although lyric generation is central, the instrumental option makes the platform relevant for background scoring, creator content, or rough audio moodboards. That broadens its value beyond conventional songwriting.

How Different Models Change User Expectations

The official pricing information suggests that plans include access to multiple models, and the creation interface includes a model selector. The site also describes the higher-tier models as better for vocals and more advanced musical behavior. That tells users something important: not every generation path is supposed to deliver the same kind of result.

This matters because many users make the mistake of blaming the whole tool for one weak result, when the actual issue may be that the request and the model were mismatched.

What Makes Lyrics Easier For The System To Handle

The official page notes support for structural tags such as verse, chorus, bridge, intro, and outro. That small detail is more useful than it sounds. Generative music systems generally respond better when they can infer structural function, not just word content.

Clear sections help the system distribute energy

A chorus wants lift. A verse often wants narrative or setup. A bridge typically benefits from contrast. Tags make those intentions easier for the system to interpret.
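To make the idea of sectioned lyrics concrete, here is a minimal sketch that assembles a tagged lyric sheet from labeled sections. The square-bracket notation such as `[Verse]` is an assumption for illustration; the source only says that tags like verse, chorus, bridge, intro, and outro are supported, so check the creation page for the exact syntax. The `format_lyrics` helper and the sample lines are hypothetical.

```python
# Section names taken from the tags the article mentions.
ALLOWED_TAGS = ("Intro", "Verse", "Chorus", "Bridge", "Outro")


def format_lyrics(sections):
    """Join (tag, lines) pairs into one tagged lyric sheet.

    The "[Tag]" bracket notation is an assumed convention, not confirmed syntax.
    """
    blocks = []
    for tag, lines in sections:
        if tag not in ALLOWED_TAGS:
            raise ValueError(f"Unknown section tag: {tag}")
        blocks.append("\n".join([f"[{tag}]", *lines]))
    # A blank line between sections keeps the structure easy to read.
    return "\n\n".join(blocks)


chorus = ["Hold the light, hold the light", "Carry it home tonight"]
sheet = format_lyrics([
    ("Verse", ["Streetlamps hum on the empty road",
               "I fold your letter into my coat"]),
    ("Chorus", chorus),
    ("Bridge", ["What if the dark was only a door"]),
    ("Chorus", chorus),  # deliberate repetition of the hook
])
print(sheet)
```

Reusing the same chorus lines in two places keeps the repetition deliberate, and rejecting unknown tags catches typos before the lyrics reach the generator.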

Repetition works better when it is deliberate

Some users try to avoid repetition because they assume it feels simplistic. In reality, repetition is one of the most musical aspects of songwriting. With lyric generation, a repeated phrase can give the model a clearer center of gravity.

Shorter clarity can beat longer ambition

A dense lyrical document is not always an advantage. In my testing of tools in this category, clean, sectioned writing with direct emotional cues often outperforms a long block of overworked text.

A Three-Step Workflow For Better First Drafts

The official product flow can be simplified into a three-step working method that stays true to what the platform actually shows on the site.

Step 1. Build the song brief before writing everything

Choose Simple or Custom mode, decide whether you want vocals or instrumental output, and set the model path you want to test. Then define the title, styles, and musical guidance such as genre, mood, voice, and tempo.

Step 2. Add structured lyrics with only necessary detail

If the goal is a full song, place your lyrics in the dedicated field and organize them with natural sections. Avoid packing every possible idea into one draft. The point is to communicate a song shape, not a biography.

Step 3. Generate, compare, and refine the brief

Listen for alignment first. Is the tone correct? Does the vocal attitude suit the lyric? Does the pacing feel too flat or too dramatic? This is where Text to Music becomes a decision tool rather than just an output mechanism.

Where This Kind Of Tool Can Be Most Useful

One of the more mature ways to think about ToMusic is as a translator between language and early audio evidence, not as a replacement for musicianship.

Marketing teams can test emotional positioning

A slogan, campaign phrase, or ad concept may suggest several musical identities. Instead of debating those identities abstractly, a team can generate options and decide what actually supports the message.

Writers can hear whether lyrics deserve expansion

Many lyric drafts feel promising until they are sung. Others seem ordinary until melody reveals their strength. The platform helps users decide whether a text idea is worth developing further.

Creators can move from concept to content faster

For short-form content, speed has tactical value. A creator may not need a masterpiece. They may need a usable and tonally appropriate song direction while the content idea is still timely.

What The Platform Does Not Solve Automatically

It is worth being clear about this: generation speed does not erase the need for taste, editing, or discernment. The platform can accelerate the process, but it cannot decide what is emotionally right for your project.

Prompt quality still shapes output quality

Vague or contradictory instructions can produce confused songs. If the result sounds unfocused, the first question should usually be whether the brief itself was too diffuse.

Not every lyric should become a song immediately

Some texts need rewriting before they deserve musical framing. The convenience of generation should not remove the discipline of revision.

Fast output can tempt premature approval

This may be the most common risk. When something sounds decent quickly, people sometimes stop at "acceptable" instead of pushing toward "fitting." In serious use, the tool works best when users keep listening critically.

Why The Platform Works Best As A Listening Device

In the end, the real value of ToMusic may be less about automatic composition and more about accelerated listening. It lets creators hear what their words imply musically before those implications harden into larger production choices. That is a meaningful shift.

A song draft does not need to be final to be useful. It only needs to reveal whether the concept has life, where the emotional center sits, and what kind of revision would move it closer to the intended result. ToMusic’s official workflow supports that kind of use well because it keeps the steps manageable while still allowing lyric structure, style guidance, and model choice.

That combination gives the platform a practical place in modern creative work. It is not only for people chasing finished songs. It is also for people trying to hear sooner, decide sooner, and refine with more evidence than imagination alone can provide.

What does "lyrics to song" mean?

"Lyrics to song" describes the process of turning written words into something that can actually be heard as music.

That usually means adding melody, rhythm, vocal delivery, structure, and musical direction so the idea works beyond the page.

Why do lyrics sometimes sound better than they read?

A line can feel ordinary in text but become much stronger once rhythm, melody, phrasing, and vocal tone are added.

Sound reveals timing, emphasis, and emotion in ways that plain written lyrics cannot fully show on their own.

Can AI help turn lyrics into a song draft?

Yes, AI tools can help turn lyrics into an early song draft by adding musical style, mood, tempo, and vocal interpretation.

That makes it easier to judge whether the idea has potential before spending more time refining or producing it further.

What makes lyrics easier to turn into music?

Clear structure usually helps the most, especially when lyrics are organized into sections such as verse, chorus, and bridge.

Simple phrasing, deliberate repetition, and a strong emotional direction also make it easier for an idea to take musical shape.

Is hearing a draft better than only reading lyrics?

In many cases, yes, because hearing a draft gives you faster feedback on pacing, emotion, and whether the idea actually works.

Even an imperfect audio version can be more useful than spending too long imagining how the finished song might sound.

Andrej Fedek is the creator and one-person owner of three blogs: InterCool Studio, CareersMomentum, and Bettegi. As an experienced marketer, he is driven by turning leads into customers with White Hat SEO techniques. Besides being a boss, he is a real team player with a great sense of equality.